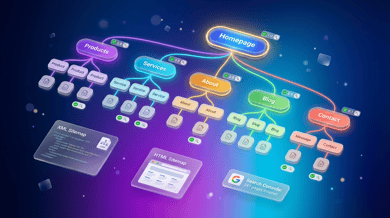

What Is a Sitemap? How to Create and Submit an XML Sitemap, and Its SEO Effects

One topic that always appears in SEO explainers is the "sitemap." It's an important mechanism that conveys a website's structure accurately to search engines and makes crawling and indexing more efficient—but many practitioners still have questions like "Should I create an XML or HTML version?", "Does creating one actually have SEO impact?", and "What should I check after submitting it?" This article systematically explains sitemap fundamentals, types, SEO effects, how to create an XML sitemap, how to submit it to Google Search Console, and operational pitfalls.

A sitemap is a file or page that lists what pages exist on a website and how they are structured. Literally "a map of the site," it plays the role of letting you see the overall structure of a website at a glance.

Sitemaps broadly fall into two categories—"for search engines" and "for users"—with different purposes and audiences. A search-engine sitemap (XML sitemap) communicates site structure to crawlers like Google and Bing, and in the SEO context, "sitemap" usually refers to this one. By contrast, a user-facing sitemap (HTML sitemap) is a navigation page that helps visitors find the pages they're looking for.

Especially for large sites with many pages, media with high update frequency, and e-commerce sites with complex structures, running sitemaps properly improves crawlability (how easily the site can be crawled), which ultimately helps maximize organic search traffic.

There are several sitemap formats, each with its own use case. Let's organize the main types.

An XML sitemap is a file that tells search-engine crawlers the list of pages on a site along with each page's last-modified time, update frequency, and relative importance. The filename "sitemap.xml" is common, and it's written in the shared format defined by sitemaps.org. Major search engines—Google, Bing, Yandex, and others—support this specification, so in the SEO context, "creating a sitemap" almost always means creating an XML sitemap.

An HTML sitemap is a page that aggregates links to main pages for users who visit the website. Organizing and listing the site's category structure and article list makes it easier for users to reach the pages they want. The SEO effect is limited, but it has secondary benefits—improving usability, reinforcing internal-link structure, and helping crawlers discover deeply nested pages. The benefit grows with site size.

XML sitemaps also have extension formats specialized for image, video, and news content. Image sitemaps target image search; video sitemaps convey video attributes (thumbnail, title, duration, etc.); news sitemaps aim for inclusion in Google News. If much of your site's content centers on images, video, or news, preparing these dedicated sitemaps in addition to the standard XML sitemap broadens the opportunity for traffic from those search verticals.

Installing a sitemap doesn't directly raise search rankings, but it significantly improves the efficiency of crawling and indexing, which form the foundation of SEO. Let's organize the specific effects.

Search engines crawl websites by following links, so pages that are deeply nested or poorly linked internally are harder to discover. Because a sitemap hands the crawler a list of the URLs you want crawled, pages that link-following alone might miss are much more likely to be found. On large sites this also helps crawl budget (the crawl resources a search engine allocates to one site) go to important pages.

To appear in search results, a page needs to be registered in Google's index (database). Being listed in a sitemap doesn't guarantee indexing, but it's an important signal that tells the search engine the page exists. In particular, for newly created pages and articles hard to reach from existing links, including them in a sitemap tends to shorten the time to discovery and indexing.

Because XML sitemaps let you list each page's last-modified datetime (lastmod), crawlers can more easily identify pages updated since the last crawl. For owned media and news sites that update content frequently, updates get reflected in search results faster, which is advantageous for keywords where freshness matters.

Google's official documentation explains that sitemaps are particularly effective for sites with many pages, sites with deep archives, new sites with few external links, and sites with lots of rich content like videos and images. Conversely, small sites with only a few dozen pages and well-organized internal-link structures can still be crawled without a sitemap. Because setup cost is low, however, preparing one regardless of scale is the baseline approach.

From here, we explain in concrete terms how to create an XML sitemap—the format most used in practice. The order is: first decide "what to include," next understand the format, and finally pick a creation method.

The principle is to list only "canonical URLs" you want displayed in search results. Specifically, include public pages that receive organic traffic—top page, service pages, article pages, category pages, and so on.

By contrast, leave out pages tagged noindex, pages whose canonical points to a different URL, 404 pages, URLs that redirect, and private member-only pages. Listing them by mistake produces statuses such as "Excluded by 'noindex' tag" or "Page with redirect" in Search Console, which adds noise to the indexing report and makes genuine problems harder to spot.
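The inclusion rule above can be sketched as a small filter. This is a minimal illustration, not a definitive implementation: the `Page` record and its fields (`status_code`, `noindex`, `canonical`, `requires_login`) are hypothetical names standing in for whatever your CMS or database actually exposes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    """Hypothetical page record; field names are assumptions for illustration."""
    url: str
    status_code: int = 200
    noindex: bool = False
    canonical: Optional[str] = None  # None is treated as self-canonical
    requires_login: bool = False


def belongs_in_sitemap(page: Page) -> bool:
    """Return True only for public, indexable, canonical URLs."""
    if page.status_code != 200:  # drops 404s and redirecting URLs
        return False
    if page.noindex or page.requires_login:
        return False
    if page.canonical is not None and page.canonical != page.url:
        return False  # canonical points to a different URL
    return True


pages = [
    Page("https://example.com/"),
    Page("https://example.com/blog/post-1",
         canonical="https://example.com/blog/post-1"),
    Page("https://example.com/blog/post-1?ref=x",
         canonical="https://example.com/blog/post-1"),
    Page("https://example.com/old", status_code=301),
    Page("https://example.com/gone", status_code=404),
    Page("https://example.com/thanks", noindex=True),
    Page("https://example.com/members/area", requires_login=True),
]

sitemap_urls = [p.url for p in pages if belongs_in_sitemap(p)]
print(sitemap_urls)
```

Only the home page and the self-canonical article survive the filter; every other entry matches one of the exclusion cases listed above.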

XML sitemaps follow the sitemaps.org specification. The minimal structure is simple: a <urlset> root element containing one <url> entry per page. A single file is limited to 50,000 URLs or 50MB uncompressed, whichever is reached first; beyond that, split the sitemap and add a "sitemap index" (a file that lists multiple sitemaps). URLs must be absolute, and the file must be UTF-8 encoded.
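As a rough sketch of that minimal structure, the following uses Python's standard `xml.etree.ElementTree` to emit a `<urlset>` with one `<url>`/`<loc>` pair per page. The function name `build_urlset` and the example.com URLs are placeholders, and the `xml_declaration` argument assumes Python 3.8 or later.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_urlset(urls):
    """Serialize absolute URLs into a minimal UTF-8 XML sitemap (bytes)."""
    urlset = ET.Element("urlset", {"xmlns": SITEMAP_NS})
    for url in urls:
        url_el = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" when serializing.
        ET.SubElement(url_el, "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


xml_bytes = build_urlset([
    "https://example.com/",
    "https://example.com/search?q=a&page=2",
])
print(xml_bytes.decode("utf-8"))
```

Building the tree with a library rather than string concatenation means entity escaping is handled for you, which matters for URLs that contain query strings.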

You can write four elements for each URL in an XML sitemap. loc is the page's URL and is required. lastmod is the last-modified date (W3C Datetime format); changefreq is the update frequency (always/hourly/daily/weekly/monthly/yearly/never); priority is the relative importance within the site (0.0–1.0).

Note that Google's documentation states that it ignores changefreq and priority, and that it uses lastmod only when the value is consistently and verifiably accurate. In practice, the mainstream stance is to focus on outputting an accurate lastmod and to omit changefreq and priority (or not to expect much from them if you include them).
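Since lastmod is the one optional element worth getting right, here is a minimal sketch of formatting a timestamp in W3C Datetime form. The helper name `to_lastmod` is hypothetical, and the sketch assumes your system stores the real content-modification time as a timezone-aware `datetime`.

```python
from datetime import datetime, timedelta, timezone


def to_lastmod(modified_at: datetime) -> str:
    """Format a timezone-aware datetime as W3C Datetime (YYYY-MM-DDThh:mm:ss+hh:mm)."""
    if modified_at.tzinfo is None:
        # A naive datetime has no offset, so the output would be ambiguous.
        raise ValueError("lastmod needs a timezone-aware datetime")
    return modified_at.replace(microsecond=0).isoformat()


jst = timezone(timedelta(hours=9))
print(to_lastmod(datetime(2024, 5, 1, 9, 30, 0, 123456, tzinfo=jst)))
# 2024-05-01T09:30:00+09:00
```

The value should come from when the page content actually changed, not from when the sitemap file was regenerated; stamping every URL with the generation time is a common way to make lastmod inaccurate.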

There are broadly three ways to create an XML sitemap. The first is to use a CMS's built-in feature or a plugin. On WordPress, the native sitemap feature (version 5.5 and later) or a plugin such as "XML Sitemaps," "Yoast SEO," or "Rank Math" updates the sitemap automatically every time an article is published or updated. Operational cost is lowest, and this is the recommended approach for most sites.

The second is using an automated sitemap-generation tool (web services like XML-Sitemaps.com). You just enter a URL and it crawls your site and generates a sitemap, making it suited to sites built with static HTML or sites not using a CMS. The downside is that you have to manually regenerate when page counts change, which makes it unsuitable for large sites.

The third is generating the sitemap dynamically from your own system. For large, complex sites such as custom-built e-commerce or headless-CMS setups, this approach offers the most flexibility. You implement the pipeline yourself: extract public pages from the database, emit an accurate lastmod for each, split the output into files of at most 50,000 URLs, and bundle the files with a sitemap index.
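The splitting and bundling steps might look roughly like the sketch below. The `sitemap-N.xml` naming scheme, the `base_url` parameter, and the function names are all assumptions for illustration; the sketch splits by URL count only and does not check the 50MB size limit.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000


def chunk(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def build_sitemap_index(urls, base_url, max_urls=MAX_URLS_PER_FILE):
    """Return (index_xml, files), where files maps each child filename to its URLs."""
    files = {}
    index = ET.Element("sitemapindex", {"xmlns": SITEMAP_NS})
    for n, part in enumerate(chunk(urls, max_urls), start=1):
        filename = f"sitemap-{n}.xml"  # hypothetical naming scheme
        files[filename] = part
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url}/{filename}"
    return ET.tostring(index, encoding="unicode"), files


# A tiny limit is used here only so the split is visible.
urls = [f"https://example.com/p/{i}" for i in range(5)]
index_xml, files = build_sitemap_index(urls, "https://example.com", max_urls=2)
print(index_xml)
print({name: len(part) for name, part in files.items()})
```

Each entry in `files` would then be serialized as its own `<urlset>` file, and only the index URL needs to be submitted.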

After creating a sitemap, the standard practice is to submit it in Google Search Console so that Google knows it exists. Submission itself takes only a few minutes; the key is to pair it with a post-submission status check.

Before submitting, upload the sitemap file to the server and directly access the URL (e.g., https://example.com/sitemap.xml) from a browser to confirm it displays correctly. It's also a prerequisite that the target site is registered in Google Search Console as a property with verified ownership. The target URL scope differs depending on whether you're using a domain property or a URL-prefix property, so know your own registration state.
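Alongside the browser check, a quick local sanity check can catch basic format problems before submission. The sketch below is a hypothetical helper (`check_sitemap` is not part of any tool mentioned here) that covers only well-formedness, the root element, the URL-count and size limits, and absolute URLs; it is not a full validator.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50MB, uncompressed


def check_sitemap(xml_bytes):
    """Return a list of human-readable problems; an empty list means none were found."""
    problems = []
    if len(xml_bytes) > MAX_BYTES:
        problems.append("file exceeds 50MB uncompressed")
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return problems + [f"not well-formed XML: {exc}"]
    if root.tag != f"{{{NS}}}urlset":
        return problems + [f"unexpected root element: {root.tag}"]
    locs = [(el.text or "").strip() for el in root.iter(f"{{{NS}}}loc")]
    if len(locs) > MAX_URLS:
        problems.append(f"{len(locs)} URLs exceeds the 50,000 limit")
    for loc in locs:
        parts = urlsplit(loc)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            problems.append(f"not an absolute URL: {loc!r}")
    return problems


sample = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>/about</loc></url>
</urlset>"""

print(check_sitemap(sample))
```

In this sample the relative path `/about` is flagged, since sitemap URLs must be absolute.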

Log in to Search Console and open "Indexing > Sitemaps" in the left menu. Enter the sitemap URL (the path after the domain, e.g., sitemap.xml) in the "Add a new sitemap" field at the top and click the "Submit" button—that's it. After submission, the entry is added to the "Submitted sitemaps" list; if the status shows "Success," it's been properly accepted.

It takes several days to several weeks for crawling to progress, so periodically check the "Discovered URLs" count and the indexing state. The Search Console "Pages" report lets you see which URLs discovered from the sitemap were actually indexed, which were not, and why (noindex, duplicate, soft 404, etc.). If many pages listed in the sitemap aren't getting indexed, it's a sign to suspect content quality or duplication issues.

In addition to submitting via Search Console, it's recommended to list the sitemap URL in the robots.txt at the site root. Writing "Sitemap: https://example.com/sitemap.xml" in robots.txt tells crawlers from other search engines, such as Bing and Yandex, where the sitemap is, since they never see your Search Console submission. If you operate multiple sitemaps, add one Sitemap line per file.
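To confirm that the Sitemap lines in a robots.txt are readable by a parser, one option is Python's standard `urllib.robotparser`, whose `site_maps()` method (available from Python 3.8) returns the declared sitemap URLs. The robots.txt content below is a made-up example with two hypothetical sitemap files.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content; the paths and filenames are placeholders.
robots_txt = """\
User-agent: *
Disallow: /admin/

Sitemap: https://example.com/sitemap-posts.xml
Sitemap: https://example.com/sitemap-products.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# site_maps() returns a list of declared sitemap URLs, or None if there are none.
declared_sitemaps = parser.site_maps()
print(declared_sitemaps)
```

The Sitemap directive is independent of any User-agent group, so it can appear anywhere in the file.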

Submitting to Search Console and listing in robots.txt aren't either-or—doing both is the standard best practice.

Sitemaps aren't "create and done"; they keep delivering value only if they are maintained. Let's go over common failure patterns seen in the field.

The first is noindex or 404 pages leaking into the sitemap. Depending on CMS or template settings, unpublished or deleted pages can remain in the sitemap automatically, so check Search Console regularly for warnings.

The second is URL canonicalization mismatches. If your canonical tag specifies "www.example.com" but your sitemap lists "example.com," the two send conflicting signals about which URL is canonical, which can delay or prevent indexing of the version you want. Make the sitemap match the canonical form exactly: HTTPS vs. HTTP, with or without www, and with or without a trailing slash.
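A mismatch like this is easy to detect mechanically. The sketch below flags sitemap URLs whose scheme or host differs from the canonical form; the function name and the default `https` / `www.example.com` values are assumptions, and trailing-slash policy is deliberately left out because it varies by site.

```python
from urllib.parse import urlsplit


def mismatched_urls(sitemap_urls,
                    canonical_scheme="https",
                    canonical_host="www.example.com"):
    """Return the sitemap URLs whose scheme or host is not the canonical one."""
    bad = []
    for url in sitemap_urls:
        parts = urlsplit(url)
        # hostname is lowercased by urlsplit and excludes any port.
        if parts.scheme != canonical_scheme or parts.hostname != canonical_host:
            bad.append(url)
    return bad


urls = [
    "https://www.example.com/",
    "https://example.com/about",        # missing www
    "http://www.example.com/contact",   # HTTP instead of HTTPS
]
print(mismatched_urls(urls))
```

Running a check like this in the sitemap-generation step keeps non-canonical variants from reaching the published file.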

The third is a sitemap that never gets updated. A statically generated sitemap left alone won't reflect new articles and will keep listing deleted pages. Use a CMS plugin or an automated generation mechanism so that the sitemap updates in sync with content changes.

The fourth is exceeding the file limits. A sitemap file over 50,000 URLs or 50MB (uncompressed) is not processed. Large sites must prepare a sitemap index and split sitemaps, for example by category or article type.

The fifth is the misconception that "submitting a sitemap gets a page indexed." A sitemap only communicates a page's existence to the search engine; it doesn't guarantee indexing or rankings. Content quality, E-E-A-T, and internal-link structure must be in place first, and the sitemap is a supporting mechanism that delivers value on top of that foundation.

A sitemap is a foundational SEO measure that communicates site structure accurately to search engines and raises the efficiency of crawling and indexing. Understand the roles of the XML sitemap (for search engines) and the HTML sitemap (for users), pick the creation method that fits your site's scale and structure, and treat submission to Google Search Console and declaration in robots.txt as a pair. If you list only canonical URLs, keep lastmod accurate, and build a mechanism that regenerates the sitemap in sync with updates, the sitemap becomes an effective tool for getting new pages and updates reflected in search results quickly. Start by checking your site's sitemap status in Search Console and auditing for errors, warnings, and whether the sitemap includes every necessary page and nothing extra.

A systematic guide to video marketing: what it is, differences from text, static images, and live streaming, the five be...

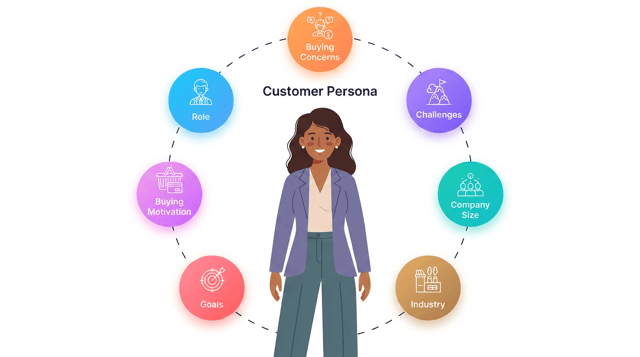

A systematic guide to persona marketing: what it is; how it differs from target, segmentation, customer journey, and ICP...

A comprehensive guide to LLMO (Large Language Model Optimization): what it is, how it differs from SEO, GEO, and AIO, th...