What Is robots.txt? A Guide to Syntax, Setup, and SEO Impact

"How exactly do I write a robot.txt file?" "Is the location fixed?" "What happens to SEO if I get the configuration wrong?"—these are common questions among technical SEO beginners and seasoned practitioners alike. Note that the official name is "robots.txt" (plural), but search engines also see queries like "robot.txt" and "robots txt," so this article uses the official name throughout. robots.txt is a plain-text file that tells search engine crawlers (like Googlebot) which URLs they may—or may not—crawl. It's one of the technical foundations of SEO.

This article covers everything from the fundamental concepts of robots.txt to syntax, file placement, use-case-specific examples, SEO impact, how to handle AI crawlers (GPTBot, ClaudeBot, Google-Extended), a topic that has grown in importance as of 2026, validation and testing methods, and common mistakes. It's a practical guide for web managers, SEO specialists, and engineers searching for "robot.txt" or "robots.txt" who need to configure their site correctly.

To handle robots.txt correctly, you first need an accurate understanding of its role and the boundary between what it can and cannot do. Many SEO troubles stem from misunderstanding how robots.txt works.

robots.txt is a text file placed at the root directory of a website that tells search engine crawlers (robots), starting with Googlebot, which URLs they may or may not access. It's based on the Robots Exclusion Protocol (REP) proposed in 1994, which was formally standardized by the IETF as RFC 9309 in 2022. In practice, it suppresses crawling of areas that don't need to be indexed—admin panels, staging environments, search-results pages, parameter-laden URLs—so that crawl budget concentrates on important pages.

What robots.txt can do is purely "suppress crawler access to a URL." Specifically: restrict crawling to particular directories or files, restrict only specific crawlers, and notify the location of the sitemap (sitemap.xml). What it cannot do: First, it cannot remove already-indexed URLs from search results. Removal requires the noindex meta tag or the Search Console removal tool. Second, even URLs blocked by robots.txt may appear in search results as plain URL listings (no title or snippet) if they have many external links. Third, robots.txt is a "request"—malicious crawlers and scrapers may ignore it. It's not appropriate as a means of protecting confidential information.

Mechanisms commonly confused with robots.txt include the meta robots tag (noindex/nofollow) and sitemap.xml. Whereas robots.txt controls "crawl (access)," meta robots `noindex` says "crawling is allowed, but reject indexing in search results." If you tell robots.txt "don't crawl" a URL, Google can't access it—and therefore can't read the page's noindex directive—creating the contradictory result that the page may continue to appear in search results. To remove a URL from the index, do not block it in robots.txt; use the noindex meta tag or the X-Robots-Tag HTTP header instead. sitemap.xml is the inverse: it tells search engines which URLs to crawl. The standard practice is to use both, with the `Sitemap` directive inside robots.txt pointing to the sitemap.xml location.

The syntax is simple, built from a small set of directives. Below are the directives you should know at minimum, in order.

The User-agent directive specifies which crawler the following rules apply to. Use `User-agent: *` to target all crawlers; use `User-agent: Googlebot` to target a specific crawler by name. Major search-engine crawlers include Google's `Googlebot` (with `Googlebot-Image` for images and `Googlebot-Video` for video), Bing's `bingbot`, and Yandex's `YandexBot`. A single robots.txt can contain multiple User-agent blocks separated by blank lines. Crawlers follow the most specific matching rule, so if both `User-agent: *` and `User-agent: Googlebot` exist, Googlebot only follows the Googlebot block.
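As a rough illustration of how a crawler picks its group, the sketch below feeds a made-up robots.txt to Python's standard `urllib.robotparser`. The paths `/private/` and `/drafts/`, the host, and the `SomeOtherBot` name are assumptions for the example. The standard-library parser is simpler than Google's, but it selects groups the same way.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt with a generic group and a Googlebot-specific group.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: Googlebot
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Googlebot uses only its own group, so /private/ is NOT blocked for it.
googlebot_private = parser.can_fetch("Googlebot", "https://example.com/private/page.html")
googlebot_drafts = parser.can_fetch("Googlebot", "https://example.com/drafts/post.html")
# Any other crawler falls back to the "*" group.
other_private = parser.can_fetch("SomeOtherBot", "https://example.com/private/page.html")

print(googlebot_private, googlebot_drafts, other_private)  # True False False
```

If `/private/` should also be off-limits to Googlebot, the rule has to be repeated inside the Googlebot group.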

Disallow forbids crawling of the following path. `Disallow: /admin/` suppresses crawling of all URLs under `/admin/`. `Disallow: /` blocks the entire site, while `Disallow:` (no value) allows the entire site to be crawled. Allow creates exceptions inside a Disallowed scope. For example, `Disallow: /private/` blocks `/private/`, but `Allow: /private/public.html` lets that specific file be crawled. Paths support the wildcard `*` (matches any string) and the end-anchor `$` (matches the end of the URL). For instance, `Disallow: /*.pdf$` blocks crawling of all PDFs.
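Python's standard `urllib.robotparser` does not interpret `*` or `$`, so the following is a hand-rolled sketch of the matching described above, not any search engine's actual implementation. It assumes the precedence described in RFC 9309: the longest matching pattern wins, and Allow wins a tie. The helper names `pattern_to_regex` and `is_allowed` are hypothetical.

```python
import re

def pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a regex anchored at the path start."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape everything literally, then turn each "*" back into "match anything".
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))

def is_allowed(rules: list[tuple[str, str]], path: str) -> bool:
    """rules: ("allow" | "disallow", pattern) pairs from one User-agent group."""
    best_len, allowed = -1, True  # no matching rule means the path is crawlable
    for kind, pattern in rules:
        if not pattern:  # "Disallow:" with no value matches nothing
            continue
        if pattern_to_regex(pattern).match(path):
            is_allow = kind == "allow"
            # Longest pattern wins; on a tie, Allow wins.
            if len(pattern) > best_len or (len(pattern) == best_len and is_allow):
                best_len, allowed = len(pattern), is_allow
    return allowed

rules = [
    ("disallow", "/private/"),
    ("allow", "/private/public.html"),
    ("disallow", "/*.pdf$"),
]

for path in ["/private/secret.html", "/private/public.html",
             "/docs/guide.pdf", "/docs/guide.pdf?v=2", "/index.html"]:
    print(path, is_allowed(rules, path))
```

Note that `/docs/guide.pdf?v=2` stays crawlable under `Disallow: /*.pdf$`, because the `$` anchor requires the URL to end in `.pdf`.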

The Sitemap directive tells crawlers the absolute URL of your sitemap.xml. It doesn't belong to any User-agent block and is valid anywhere in the file. Example: `Sitemap: https://example.com/sitemap.xml`. If you have multiple sitemaps, you can repeat Sitemap lines. Even if you submit sitemap.xml directly through Search Console, listing it in robots.txt is recommended.
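A small sketch of the point that Sitemap lines are independent of User-agent groups, using Python's standard `urllib.robotparser` (its `site_maps()` method requires Python 3.8 or later). The second sitemap URL, `sitemap-news.xml`, is an assumed name for illustration.

```python
from urllib.robotparser import RobotFileParser

# Sitemap lines placed both before and after a User-agent group.
ROBOTS_TXT = """\
Sitemap: https://example.com/sitemap.xml

User-agent: *
Disallow: /admin/

Sitemap: https://example.com/sitemap-news.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns every Sitemap URL in file order, or None if there are none.
sitemaps = parser.site_maps()
print(sitemaps)
```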

Crawl-delay specifies the interval in seconds between crawler requests, but Google does not support this directive and ignores it. Some other crawlers, such as Bing's, still honor it. Search Console's crawl-rate limiter was retired in January 2024, so it is no longer a way to influence Googlebot; to slow Googlebot temporarily, Google's documentation recommends returning 500, 503, or 429 status codes. Including Crawl-delay does no harm, but understand that Google won't act on it. If your server is under load, server-side rate limiting and cache optimization are the realistic remedies.
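For crawlers that do honor it, Crawl-delay is read per User-agent group. The sketch below shows how a polite crawler written in Python might look it up with the standard `urllib.robotparser`. The 5-second value and the `SomeOtherBot` name are assumptions for the example.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: bingbot
Crawl-delay: 5

User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

bing_delay = parser.crawl_delay("bingbot")        # value declared in the bingbot group
other_delay = parser.crawl_delay("SomeOtherBot")  # None: the "*" group declares no delay
print(bing_delay, other_delay)
```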

Where and how you place robots.txt is strict. Misplacement makes the file completely ineffective.

robots.txt must be placed in the root directory of each host (domain + subdomain). For `https://example.com/`, robots.txt must be reachable at `https://example.com/robots.txt`. A file in a subdirectory like `/blog/robots.txt` will not be recognized by search engines. Subdomains (`blog.example.com`, etc.) are independent hosts and need their own robots.txt files. Protocol (http/https) and port number are also distinguished, so HTTPS-enabled sites must serve a proper HTTPS robots.txt.

robots.txt is plain text, with UTF-8 encoding recommended. A BOM (Byte Order Mark) can break parsing for some crawlers, so save without a BOM. Google reads up to 500 KiB (512,000 bytes) of robots.txt; anything beyond is ignored. In practice, the file fits within a few KB to tens of KB, so the limit rarely matters. Both CRLF (Windows) and LF (Unix) line endings work; LF is recommended to match web-server conventions. Comments use `#` to end of line and are useful for documenting rules and operational notes.
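The format rules above can be turned into a quick pre-deployment check. This is a minimal sketch: the function name `check_robots_bytes` and the warning wording are hypothetical, and the size limit is the 500 KiB figure quoted above.

```python
import codecs

MAX_BYTES = 500 * 1024  # 500 KiB, the size limit quoted for Google

def check_robots_bytes(raw: bytes) -> list[str]:
    """Return human-readable warnings for a robots.txt payload."""
    warnings = []
    if raw.startswith(codecs.BOM_UTF8):
        warnings.append("file starts with a UTF-8 BOM")
    if len(raw) > MAX_BYTES:
        warnings.append(f"file is {len(raw)} bytes; content past {MAX_BYTES} bytes may be ignored")
    try:
        raw.decode("utf-8")
    except UnicodeDecodeError:
        warnings.append("file is not valid UTF-8")
    if b"\r\n" in raw:
        warnings.append("file uses CRLF line endings (accepted, but LF is the convention)")
    return warnings

print(check_robots_bytes(b"User-agent: *\nDisallow:\n"))  # []
```

A typical use would be reading the file with `open(path, "rb").read()` and failing the build if the returned list is not empty.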

WordPress often generates robots.txt dynamically via themes or plugins, and SEO plugins like Yoast SEO and All in One SEO let you edit it directly. To use a physical file, place robots.txt at the WordPress root (the same directory as `wp-config.php`). In Next.js, you can place a static file at `public/robots.txt` or generate dynamically with `app/robots.ts` or `app/robots.js`. The best practice for dynamic generation is to swap robots.txt content between production and staging via environment variables. In a headless setup like Payload, place robots.txt in the public folder of the frontend (Next.js, etc.). With cloud delivery (CloudFront, Vercel, Netlify), confirm that the file is served correctly at the edge.
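The environment-based swap can be sketched independently of any framework. The function name `render_robots_txt`, the `APP_ENV` variable, and the default site URL below are assumptions for illustration; in Next.js the same branching would live in `app/robots.ts`.

```python
import os

def render_robots_txt(environment: str, site_url: str = "https://example.com") -> str:
    """Build robots.txt content for the given deployment environment."""
    if environment == "production":
        lines = [
            "User-agent: *",
            "Disallow:",
            f"Sitemap: {site_url}/sitemap.xml",
        ]
    else:
        # Anything not explicitly marked as production is blocked entirely.
        lines = ["User-agent: *", "Disallow: /"]
    return "\n".join(lines) + "\n"

if __name__ == "__main__":
    # APP_ENV is an assumed variable name; an unset value falls into the blocking branch.
    print(render_robots_txt(os.environ.get("APP_ENV", "staging")), end="")
```

This sketch blocks crawling whenever the variable is missing, which protects staging but means production must set it explicitly. A post-deploy check of the live file is the natural counterpart.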

Below are typical robots.txt patterns by use case. Use them as a copy-paste base.

The simplest case: open the entire site to every crawler. Use `User-agent: *` and `Disallow:` (no value), and announce the sitemap. Concretely, three lines: a User-agent line targeting all crawlers, a Disallow line with an empty value indicating no blocks, and a Sitemap line announcing `https://example.com/sitemap.xml`. This is the most basic initial setup for a new site.

To exclude WordPress admin (`/wp-admin/`) or internal admin directories (`/admin/`, `/staging/`) from crawling, list them under Disallow. Under `User-agent: *`, list `Disallow: /wp-admin/`, `Disallow: /admin/`, and `Disallow: /staging/`. For WordPress, `/wp-admin/admin-ajax.php` is needed for site functionality, so the standard pattern is to add the exception `Allow: /wp-admin/admin-ajax.php`.
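The admin-exclusion pattern can be checked with Python's standard `urllib.robotparser`, as in this minimal sketch. One assumption to flag: this parser applies the first matching rule in file order, so the Allow line is listed first here. Google instead picks the most specific match, so the order would not matter to Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Allow comes first because Python's parser uses first-match semantics.
ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
Disallow: /admin/
Disallow: /staging/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def allowed(path: str) -> bool:
    """True if a generic crawler may fetch the path on the example host."""
    return parser.can_fetch("*", "https://example.com" + path)

for path in ["/wp-admin/admin-ajax.php", "/wp-admin/options.php",
             "/staging/index.html", "/blog/post"]:
    print(path, allowed(path))
```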

On-site search-results pages (`/search?q=...`) and URLs with filtering or sorting parameters are low-value duplicate content for search engines and should typically be excluded from crawling. Examples: `Disallow: /search`, `Disallow: /*?sort=`, `Disallow: /*?filter=`. Wildcards bundle parameter-laden URLs into a single block. Note that `/*?sort=` matches only when `sort` is the first query parameter; a pattern such as `/*?*sort=` also catches it later in the query string. Excluding these URLs helps concentrate crawl budget and can improve crawl frequency for important pages.

To control crawling of specific file types, use wildcards and end anchors. To block all PDFs: `Disallow: /*.pdf$`. To block a specific image directory: `Disallow: /private-images/`. If you depend on image SEO or FAQ rich results, blocking images reduces visual exposure on results pages. Don't block lightly—check Search Console to see whether images are contributing to traffic before deciding.

As of 2026, it's common to block generative-AI crawlers (training crawls) like ChatGPT and Claude when you don't want your content used as training data. To block OpenAI's `GPTBot`, Anthropic's `ClaudeBot`, Google's `Google-Extended`, or Common Crawl's `CCBot` individually, create a User-agent block for each and list Disallow rules. For example: `User-agent: GPTBot` + `Disallow: /` blocks GPTBot site-wide, while `User-agent: Googlebot` + `Disallow:` (no value) keeps Google Search crawling allowed. As discussed below, AI-bot policy is a major theme in 2026 technical SEO.
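A per-crawler policy like this can be sanity-checked before deployment. The sketch below uses Python's standard `urllib.robotparser`; the `/article` path and the final catch-all `*` group are assumptions for the example.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: Googlebot
Disallow:

User-agent: *
Disallow:
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

bots = ["GPTBot", "ClaudeBot", "Google-Extended", "CCBot", "Googlebot", "bingbot"]
# Map each crawler name to whether it may fetch a sample article URL.
results = {bot: parser.can_fetch(bot, "https://example.com/article") for bot in bots}
print(results)
```

The four AI-training crawlers come back blocked, while Googlebot and any crawler covered only by the `*` group remain allowed.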

robots.txt is a double-edged sword: configured correctly it helps SEO; configured wrong it can erase your search equity overnight. We summarize the SEO impact along three angles.

Google allocates a "crawl budget" to each site—the maximum number of URLs it will crawl—and this matters especially for large sites. By blocking low-value URLs (search-results pages, parameterized URLs, admin pages) in robots.txt, you concentrate crawl resources on important pages and accelerate indexing of new and updated pages. On large e-commerce or media sites with tens of thousands of pages, robots.txt optimization alone has been reported to lift organic traffic by 10–20%. The larger the site, the bigger the SEO impact of good robots.txt design.

The scariest robots.txt mistake is accidentally blocking important pages or the whole site. The most common case is writing `Disallow: /` (block entire site) for staging and shipping it to production, after which the entire site disappears from search results. Blocking CSS or JavaScript files also hurts: Google can't render the page properly, which harms mobile-usability and Core Web Vitals evaluations. Since 2014 Google has strongly recommended allowing CSS/JS, and there is normally no reason to block them. Always validate robots.txt changes before deploying to production, for example with a crawl simulation in an SEO audit tool or an open-source robots.txt parser, to confirm no unintended URLs are blocked. The standalone robots.txt Tester in Search Console has been retired, so use the Search Console robots.txt report and URL Inspection to confirm the result after deployment.

To repeat: robots.txt is not a tool for "removing from the index." Blocking already-indexed URLs in robots.txt only prevents Google from accessing them—the URLs themselves stay in the index, and with enough external links may continue to appear in search results without titles. To remove a specific page from search results, do not block it in robots.txt; instead place `<meta name="robots" content="noindex">` on the page or return `X-Robots-Tag: noindex` as an HTTP header. Google must crawl the target page and read the noindex directive in order to remove it.
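For non-HTML files or whole URL groups, the header route is often easier than editing markup. The following is a minimal WSGI sketch, not a production server: the `/old-campaign/` prefix, the `app` function, and the `response_headers` helper are hypothetical. The affected paths must stay crawlable in robots.txt, or the header will never be seen.

```python
from wsgiref.util import setup_testing_defaults

NOINDEX_PREFIXES = ("/old-campaign/",)  # hypothetical paths to drop from the index

def app(environ, start_response):
    """Tiny WSGI app that adds X-Robots-Tag: noindex for selected paths."""
    path = environ.get("PATH_INFO", "/")
    headers = [("Content-Type", "text/html; charset=utf-8")]
    if path.startswith(NOINDEX_PREFIXES):
        headers.append(("X-Robots-Tag", "noindex"))
    start_response("200 OK", headers)
    return [b"<!doctype html><title>Example</title>"]

def response_headers(path: str) -> dict:
    """Call the app in-process and return the headers it would send."""
    environ = {}
    setup_testing_defaults(environ)
    environ["PATH_INFO"] = path
    captured = {}

    def start_response(status, headers):
        captured.update(headers)

    app(environ, start_response)
    return captured

print(response_headers("/old-campaign/summer.html"))
```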

From 2023 onward, generative-AI services have crawled web content extensively as training data, expanding the role of robots.txt from "instructions to search engines" to "a declaration of AI-training permissions." In 2026 it's an indispensable topic in technical SEO.

Notable AI crawlers include OpenAI's `GPTBot` (training crawl for ChatGPT), Anthropic's `ClaudeBot`, and Google's `Google-Extended`, which Google operates separately from its search crawl for AI training. These differ in purpose from search-engine crawls, and blocking them is said to have no direct impact on search rankings. For example, blocking `Google-Extended` doesn't affect Google Search rankings—it only suppresses Google's generative AI (Gemini, etc.) from using your content for training. Other AI-related crawlers include `PerplexityBot`, `CCBot` (Common Crawl), `Bytespider` (ByteDance), `Amazonbot`, and `Applebot-Extended`. Setting allow/disallow individually per your policy has become standard.

Policy on AI crawlers depends on your content strategy. Media companies and news organizations whose content is the competitive moat tend to block AI bots wholesale to prevent unauthorized training. Companies prioritizing brand awareness and branded search may treat citation by generative AI as "zero-click branding" and allow AI crawlers. Citation in AI Overviews and conversational AI search builds awareness assets even without clicks. What matters is deciding the policy in light of business goals, and clearly declaring "AI crawlers allowed/blocked" in robots.txt. Decide early rather than operating ambiguously: once content has been used for training, it is nearly impossible to retract if you change policy later.

Since 2024, there has been growing interest in "llms.txt," a complementary text file optimized for AI. It summarizes the site's content structure in a form LLMs can parse, placed as Markdown at `/llms.txt`. llms.txt is not a replacement for robots.txt but a complement: robots.txt controls "access permission," while llms.txt tells AI "site overview and where the main content is." Standardization is in progress and not all AI services support it as of 2026, but companies prioritizing visibility in AI search are adopting it early.

Always validate robots.txt after deployment. Use multiple methods to confirm you haven't blocked important pages.

The most reliable check is the Google Search Console (GSC) "Settings" → "robots.txt" report, which shows the robots.txt content Google currently recognizes, the latest crawl timestamp, and any errors or warnings. GSC's "URL Inspection" tool also shows whether a specific URL is being blocked by robots.txt. Make pre- and post-deployment checks habitual to avoid catastrophic mistakes.

The simplest check is to access `https://example.com/robots.txt` in a browser and visually verify the content. Confirm the HTTP status is 200, encoding is correct, and line breaks render properly. If you get a 404, the file may be misplaced or there may be a delivery configuration problem. Without a robots.txt, search engines treat the site as "crawl everything," which is not catastrophic but means you lose Sitemap notification and other benefits.

SEO audit tools like Screaming Frog SEO Spider, Ahrefs, Semrush, and Sitebulb can interpret robots.txt and simulate crawl behavior. Run a local crawl before deploying to production to comprehensively verify that no unintended URLs are blocked and only the intended URLs are. For large-site robots.txt changes, the 2026 best practice is to combine GSC URL Inspection with full-site simulation in these tools.

Below are the most common robots.txt mistakes and their remedies. Most can be prevented with pre-deployment review and testing.

The most common—and most damaging—mistake is shipping the staging `User-agent: *` + `Disallow: /` to production. The entire site disappears from the index in days, and organic traffic collapses to near zero. The fix: dynamically swap robots.txt by environment variable (full access in production, full block in staging); add automated checks to your deploy pipeline to catch `Disallow: /` reaching production; and include robots.txt verification in your post-deploy checklist.
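The automated pipeline check can be quite small. The sketch below is one possible shape, assuming the production robots.txt text is available to the deploy script. The function name `blocks_whole_site` and the list of user agents are hypothetical.

```python
from urllib.robotparser import RobotFileParser

def blocks_whole_site(robots_txt: str,
                      user_agents=("Googlebot", "bingbot", "*")) -> list[str]:
    """Return the user agents for which the homepage itself is disallowed."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [ua for ua in user_agents
            if not parser.can_fetch(ua, "https://example.com/")]

staging = "User-agent: *\nDisallow: /\n"
production = "User-agent: *\nDisallow:\nSitemap: https://example.com/sitemap.xml\n"

print(blocks_whole_site(staging))     # ['Googlebot', 'bingbot', '*']
print(blocks_whole_site(production))  # []
```

In a pipeline, a non-empty result for the production build would fail the deploy step, for example by exiting with a non-zero status.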

Some sites still carry `Disallow: /css/` or `Disallow: /js/` rules left over from old SEO playbooks, which is counterproductive in modern SEO. If Google can't crawl CSS/JS, it can't render the page properly, which hurts mobile-usability and Core Web Vitals evaluations. The fix is straightforward: remove the CSS/JS Disallows. Google's current guidelines recommend allowing crawlers access to CSS, JavaScript, and image files.

Some sites still put a `Noindex:` directive inside robots.txt, but Google officially ended support in September 2019. It has no effect on indexing today. The fix: use the meta robots tag or X-Robots-Tag HTTP header for noindex. Return to the basic principle that robots.txt controls "crawl permission" and is separate from "index permission."

robots.txt paths match by prefix, so `Disallow: /admin` matches `/admin`, `/admin/`, `/admin/users`, and even `/admin-panel`. To block only what's under `/admin/`, append a trailing slash: `Disallow: /admin/`. Paths are also case-sensitive: `Disallow: /Admin/` does not match `/admin/`. Match the URL structure of your site exactly. For complex patterns, verify URL matching beforehand with a third-party robots.txt validator or an open-source parser, then confirm with GSC URL Inspection after deployment.
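The prefix and case behavior is easy to confirm with Python's standard `urllib.robotparser`, which uses plain prefix matching. The helper `blocked_paths` and the sample paths are assumptions for this sketch.

```python
from urllib.robotparser import RobotFileParser

def blocked_paths(rule: str, paths: list[str]) -> list[str]:
    """Return the paths that a single Disallow rule blocks for all crawlers."""
    parser = RobotFileParser()
    parser.parse(["User-agent: *", f"Disallow: {rule}"])
    return [p for p in paths
            if not parser.can_fetch("*", "https://example.com" + p)]

paths = ["/admin", "/admin/", "/admin/users", "/admin-panel", "/Admin/"]

without_slash = blocked_paths("/admin", paths)
with_slash = blocked_paths("/admin/", paths)

print(without_slash)  # ['/admin', '/admin/', '/admin/users', '/admin-panel']
print(with_slash)     # ['/admin/', '/admin/users']
```

Neither rule blocks `/Admin/`, and the trailing-slash version also leaves the bare `/admin` URL crawlable.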

The Sitemap directive requires an absolute URL. A relative path like `Sitemap: /sitemap.xml` won't be parsed by crawlers. Always use a full URL like `Sitemap: https://example.com/sitemap.xml` with protocol and domain. Also, sites that migrated to HTTPS sometimes leave the http:// version in place. Match the URL to HTTPS to avoid confusing crawlers.
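Both problems can be caught with a short lint step. This is a minimal sketch that assumes the site is served over HTTPS; the function name `sitemap_line_problems` and the message wording are hypothetical.

```python
from urllib.parse import urlsplit

def sitemap_line_problems(robots_txt: str) -> list[str]:
    """Flag Sitemap lines that are not absolute HTTPS URLs."""
    problems = []
    for line in robots_txt.splitlines():
        key, sep, value = line.partition(":")
        if not sep or key.strip().lower() != "sitemap":
            continue
        url = value.split("#", 1)[0].strip()  # drop any trailing comment
        parts = urlsplit(url)
        if not (parts.scheme and parts.netloc):
            problems.append(f"not an absolute URL: {url}")
        elif parts.scheme != "https":
            problems.append(f"not HTTPS: {url}")
    return problems

sample = """\
User-agent: *
Disallow:
Sitemap: /sitemap.xml
Sitemap: http://example.com/sitemap.xml
Sitemap: https://example.com/sitemap.xml
"""

print(sitemap_line_problems(sample))
```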

robots.txt is a plain-text file that tells search-engine crawlers "which URLs you may or may not access on this site." It's the most basic of the basics in technical SEO. With a small set of directives—User-agent, Disallow, Allow, Sitemap—you can control crawl behavior site-wide.

Always place the file at the domain root (`https://example.com/robots.txt`) as plain UTF-8 text, with separate files for the HTTPS version and each subdomain. Setup differs by CMS (WordPress, Next.js, etc.); pick the method that fits your stack.

The SEO impact is double-edged: correct configuration optimizes crawl budget and accelerates indexing of important pages, while misconfiguration like `Disallow: /` reaching production or blocking CSS/JS can erase search equity. The key is rigorous validation on every change using the Search Console robots.txt report, URL Inspection, and third-party tools.

In 2026, responding to generative-AI crawlers (GPTBot, ClaudeBot, Google-Extended, etc.) is the new theme of robots.txt operations. The decision to allow or block AI training depends on content strategy and business goals, and explicit declaration in robots.txt has become standard. New AI-oriented complementary files like llms.txt are also emerging, broadening the scope of technical SEO.

robots.txt configuration is one of the technical pillars alongside content SEO and SEO measurement. With an integrated dashboard like NeX-Ray, you can continuously monitor Search Console crawl stats and organic-traffic trends, quickly catching the impact of robots.txt changes. Master the basic syntax, operational rules, and the new questions of the AI era to maintain a healthy foundation for your site's search visibility.

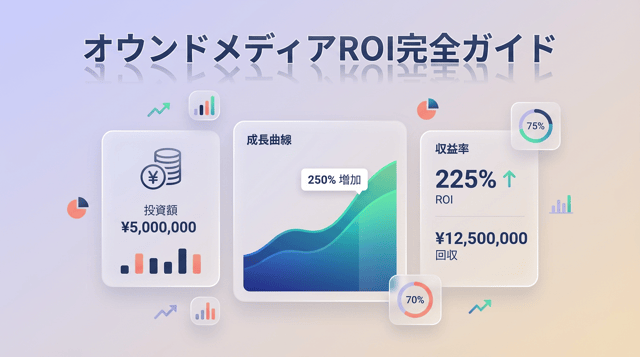

A complete guide for executives, marketing leaders, and owned media managers searching "owned media ROI" or "owned media...

A complete guide for executives and marketing leaders on "content marketing seo": the differences (goals, scope, time ho...

A complete 2026 guide to SEO benefits and performance measurement: what SEO benefits actually are (quantitative and qual...