What Is A/B Testing? Meaning, Benefits, and How to Implement It

When you need to decide objectively which variant of a marketing campaign or product experience actually performs better, A/B testing is the standard tool of data-driven organizations. Amazon, Google, Netflix, and Microsoft run thousands to tens of thousands of A/B tests per year, and decisions on everything from landing page conversion rates to ad creatives, pricing, and app UIs are increasingly made by experiment rather than by instinct or the loudest voice in the room. This article covers what A/B testing is, its key benefits, how it differs from multivariate and split URL tests, the main areas you can apply it to, a five-step framework for running successful tests, and common pitfalls to avoid.

A/B testing is an experimental method in which you prepare an original version (A) and a modified version (B) of a web page, ad creative, email, or other experience, randomly split incoming users into two groups, and apply statistical tests to metrics such as conversion rate, click-through rate, or engagement to determine which version performs better. It is also known as split testing, and at its core it applies the methodology of the randomized controlled trial (RCT) to digital marketing.

The key to A/B testing is random assignment. When users arriving during the same period through the same channels are split into two groups at random, the groups can differ in their characteristics only by chance, so any difference larger than chance would explain can be causally attributed to the change itself. Unlike a simple before-and-after comparison (pre-post analysis), an A/B test is not distorted by seasonality, trends, or concurrent campaigns, because both groups are exposed to them equally.
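In practice the random split also has to be stable: a user should see the same variant on every visit. A common approach is to hash a stable user ID together with the experiment name and map the result to a bucket. The sketch below assumes string user IDs and uses a hypothetical experiment name `cta-color`; the function `assign_variant` is illustrative only, and commercial tools implement their own bucketing schemes.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Return "A" (control) or "B" (treatment) for a user, deterministically.

    Hypothetical helper: hashing the experiment name together with the user ID
    yields a stable pseudo-random bucket, so the same user always lands in the
    same group, while different experiments split users differently.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 2**32
    return "B" if bucket < treatment_share else "A"


if __name__ == "__main__":
    groups = [assign_variant(f"user-{i}", "cta-color") for i in range(10_000)]
    print("share in B:", groups.count("B") / len(groups))  # close to 0.5
```

Because the bucket depends only on the hash, assignment needs no stored state, and including the experiment name keeps the splits of different experiments from lining up with each other.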

Familiar examples include comparing a green versus an orange Buy button on an e-commerce site, a feature-focused versus a benefit-focused hero headline on a landing page, or scarcity-based versus authority-based copy on a display ad. As long as each decision is grounded in statistical evidence, even a series of small changes can compound into meaningful improvement.

A/B testing has several variants, each suited to a different kind of question or change. Understanding the differences helps you choose the right approach for the situation.

A/B testing is the simple case of comparing one element across two variants. A multivariate test (MVT), by contrast, tests combinations of several elements at once; for example, 2 headlines by 3 images by 2 CTAs yields 12 combinations running simultaneously. MVT captures interaction effects between elements, but because traffic is divided among every combination, it needs a far larger total sample than a simple A/B test to reach statistical significance, which makes it practical only for sites with substantial traffic.
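To see why MVT is traffic-hungry, the sketch below enumerates the full-factorial design from the 2 by 3 by 2 example and applies a rough rule of thumb: if every cell needs about as many users as one arm of an A/B test, total traffic scales with the number of cells. The factor levels and the `traffic_multiplier` helper are hypothetical, and a factorial analysis of main effects alone can be more efficient than this worst case.

```python
from itertools import product

# Hypothetical factor levels matching the 2 x 3 x 2 example in the text.
headlines = ["headline_1", "headline_2"]
images = ["image_1", "image_2", "image_3"]
ctas = ["cta_1", "cta_2"]

# Full-factorial design: every combination becomes its own test cell.
cells = list(product(headlines, images, ctas))


def traffic_multiplier(num_cells: int, num_ab_arms: int = 2) -> float:
    """Rough factor of extra total traffic versus a two-arm A/B test,
    assuming each cell needs the same sample size as one A/B arm."""
    return num_cells / num_ab_arms


if __name__ == "__main__":
    print(len(cells))                      # 12
    print(traffic_multiplier(len(cells)))  # 6.0
```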

A split URL test prepares variants A and B as separate URLs and redirects users to different pages. Whereas a standard A/B test swaps elements on a single URL, a split URL test is suitable when you are changing the overall structure or design of the page significantly. It is typically used for full redesigns, landing page template overhauls, and other large-scale changes that are difficult to achieve through DOM manipulation alone.

Surveys and user interviews are qualitative methods that collect users' stated opinions or self-reported preferences. A/B testing, by contrast, measures actual user behavior with quantitative data. It's common for a design that users say they prefer in a survey to underperform in real usage. The two approaches are not in conflict: interviews are ideal for generating hypotheses, and A/B testing is ideal for validating them quantitatively. Combine them deliberately.

A/B testing has grown more important as data-driven management has spread. The underlying dynamic is simple: as the options for improving marketing and products multiply, arguments about which option actually works cannot be settled without evidence. Rising ad costs have also narrowed the room for improving CPA, so compounding small, verified improvements has become a direct lever on LTV and ROI, another tailwind for experimentation.

The first benefit is objectivity in decision-making. Instead of deferring to the boss's taste, a designer's hunch, or the loudest person in the room, you can decide based on actual user behavior. This shortens the feedback loop and reduces unproductive internal debate. Booking.com and Amazon are well known for their discipline of deciding by data rather than by HiPPO (the Highest Paid Person's Opinion).

The second benefit is causal clarity. A before-and-after comparison cannot cleanly separate the effect of a change from seasonality, but A/B testing compares two groups drawn from the same population during the same period, so a statistically significant improvement can be attributed to the change with confidence. Knowing what actually caused a result makes your next hypothesis far better informed.

The third benefit is early failure detection and risk containment. Rather than rolling out a new idea to all users at once, you expose a subset of users to it via A/B testing. If the change hurts performance, the damage stays limited; if it wins, you can safely roll it out to everyone. You gain a mechanism to steadily improve your product and marketing while keeping downside risk low.

A/B testing can be applied to virtually any user-facing touchpoint. Here are four of the most common target areas and typical elements to test within them.

The most common application is A/B testing on websites and landing pages. Test variables include the hero headline, main visual, CTA button (text, color, and placement), number of form fields, pricing display format, testimonial placement, and overall page length. Disciplined one-change-at-a-time testing has been credited with CVR improvements of tens of percent, and sometimes multiples, in many industries.

On paid search, social ads, and display ads, creative A/B testing has become standard operating practice. You test headline, image or video, body copy, CTA, and targeting in parallel variants and compare them on CTR, CVR, CPA, and ROAS. Meta Ads and Google Ads both include mechanisms that automatically optimize delivery across multiple creatives, enabling efficient experimentation at scale.

In email marketing, you can A/B test subject lines, sender names, send times, opening lines, and CTA placement and copy. Subject-line A/B testing directly moves open rate and is built into most marketing automation platforms as a standard feature. For push notifications, you test message copy, send timing, and send frequency, optimizing for engagement rate while watching opt-out rate as a guardrail.

In SaaS and mobile apps, A/B testing is commonly used when releasing new features or changing the UI. You test feature on/off, UI layout, onboarding flow, pricing plan display, and notification logic via feature flags that expose the new variant to a subset of users, measuring retention, paid conversion, NPS, and similar metrics. Netflix famously A/B tests even the thumbnail artwork for its titles, treating experimentation as a core mechanism of product improvement.

You won't get results by spinning up two variants on a whim. Following the five-step framework below will give you statistically trustworthy results and repeatable learning.

Start by articulating why you are running the test and what success looks like. Pick one primary metric (CVR, CTR, ARPU, etc.) to judge by, and turn the idea into a hypothesis that includes the change, the mechanism, and the expected effect size—for example, "Changing the CTA button from green to orange will improve visibility and lift CVR by at least 5% relative." Tests without a clear hypothesis rarely translate into next actions even when they return results.

To draw statistically meaningful conclusions, you must calculate the required sample size up front. Set your baseline metric, Minimum Detectable Effect (MDE), significance level (typically 5%), and statistical power (typically 80%), and a sample size calculator will give you the required number of users per variant. Skipping this step leads to two classic failures: collecting too little data to judge the result, and premature termination, where you stop the test as soon as interim results look favorable. Run the test for at least one to two full weeks so that day-of-week effects and variation across the user lifecycle even out.

Change only the one element you want to evaluate and keep everything else identical. Changing multiple elements at once makes it impossible to tell which change drove any observed difference. Implementation is typically done with dedicated tools such as Optimizely, VWO, AB Tasty, or Kameleoon, or, for in-product experimentation, feature-flag and experimentation platforms like LaunchDarkly, Statsig, or Eppo. After implementation, run QA to verify that user assignment and metric tracking are working correctly.

Once the test completes, compare the two groups on your pre-specified metric and judge whether the observed difference reflects a real effect or could plausibly be due to chance. Confirm that the p-value falls below your significance level, or that the confidence interval for the difference excludes zero, and report the result as an effect size with its uncertainty, for example, "Variant B lifted CVR by 3% to 7% relative to A (95% CI)." Also check for Sample Ratio Mismatch (SRM), where the observed split between groups deviates from the planned ratio and signals an assignment or tracking bug, and look at segment-level differences so you don't miss cases such as winning overall but losing on mobile.
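The analysis step can be sketched with a two-proportion z-test and a chi-square SRM check, using only Python's standard library. Both rely on the normal approximation, which is reasonable at the sample sizes A/B tests usually involve. The conversion counts are hypothetical, and the interval computed here is for the absolute difference in CVR; an interval for the relative lift needs an extra step such as the delta method or a bootstrap.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_test(conv_a: int, n_a: int, conv_b: int, n_b: int,
                        alpha: float = 0.05) -> dict:
    """Two-sided z-test for a difference in conversion rates, plus a CI."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    # Pooled standard error: computed under H0 (no difference) for the test statistic.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se_pool = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = diff / se_pool if se_pool > 0 else 0.0
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    # Unpooled standard error: used for the confidence interval of the difference.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return {
        "cvr_a": p_a,
        "cvr_b": p_b,
        "abs_diff": diff,
        "relative_lift": diff / p_a if p_a > 0 else float("nan"),
        "ci_abs_diff": (diff - z_crit * se, diff + z_crit * se),
        "p_value": p_value,
    }


def srm_p_value(n_a: int, n_b: int, expected_share_a: float = 0.5) -> float:
    """Chi-square goodness-of-fit test (1 df) of the observed split vs. the plan."""
    total = n_a + n_b
    exp_a = total * expected_share_a
    exp_b = total - exp_a
    chi2 = (n_a - exp_a) ** 2 / exp_a + (n_b - exp_b) ** 2 / exp_b
    # A chi-square variable with 1 df is a squared standard normal.
    return 2 * (1 - NormalDist().cdf(sqrt(chi2)))


if __name__ == "__main__":
    print(two_proportion_test(conv_a=500, n_a=10_000, conv_b=600, n_b=10_000))
    print("SRM p-value:", srm_p_value(10_000, 10_000))  # 1.0: split matches plan
```

A very small SRM p-value (teams often use a strict cutoff such as 0.001) means the split itself is suspect, and the test result should not be trusted until the cause is found.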

Based on the results, decide whether to roll out the winning variant to all users, run a follow-up test, or discard the idea. Crucially, don't stop at win or lose: record why you think the result occurred and what you learned. An organization-wide A/B testing knowledge base lets future tests of similar hypotheses build on past learning, so your hit rate improves over time. Companies with mature experimentation cultures, such as Booking.com and Microsoft, make past experiments and their learnings available internally for all employees to reference.

A/B testing is powerful, but poor design or operation can lead to wrong decisions. Know the common pitfalls and avoid the traps.

The first is premature judgment due to insufficient sample size. Declaring "B is winning, let's ship it" a few days in exposes you to the peeking problem, where random noise is mistaken for a real effect. The rule is: decide the required sample size and duration up front, and do not call the test until you reach them.
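The cost of peeking can be demonstrated with a small simulation of A/A tests, in which both groups share the same true CVR, so every "significant" result is a false positive. Each simulated test runs a z-test after every batch of users; one strategy stops at the first p < 0.05, the other analyzes only once at the planned end. All parameters (300 experiments, 10 peeks, 200 users per arm per peek, 5% CVR, seed 42) are hypothetical choices for a quick run.

```python
import random
from math import sqrt
from statistics import NormalDist


def z_test_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value of a pooled two-proportion z-test."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all, e.g. zero conversions in both arms
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(n_experiments=300, n_peeks=10, users_per_peek=200,
                      cvr=0.05, alpha=0.05, seed=42):
    """Return (false-positive rate with peeking, false-positive rate with one look)."""
    rng = random.Random(seed)
    peeking_false_positives = 0
    fixed_false_positives = 0
    for _ in range(n_experiments):
        conv_a = conv_b = n = 0
        stopped_early = False
        for _ in range(n_peeks):
            # Both arms share the same true CVR: this is an A/A test.
            conv_a += sum(rng.random() < cvr for _ in range(users_per_peek))
            conv_b += sum(rng.random() < cvr for _ in range(users_per_peek))
            n += users_per_peek
            # Peeking strategy: declare a winner at the first significant look.
            if not stopped_early and z_test_p_value(conv_a, n, conv_b, n) < alpha:
                stopped_early = True
        peeking_false_positives += stopped_early
        # Fixed-horizon strategy: one test on the same data at the planned sample size.
        fixed_false_positives += z_test_p_value(conv_a, n, conv_b, n) < alpha
    return (peeking_false_positives / n_experiments,
            fixed_false_positives / n_experiments)


if __name__ == "__main__":
    peeking, fixed = simulate_aa_tests()
    print(f"false positives with peeking: {peeking:.1%}")
    print(f"false positives, single look: {fixed:.1%}")
```

The single final analysis stays near the nominal 5% false-positive rate, while stopping at the first significant peek yields substantially more false positives; the exact figures vary with the seed and the number of peeks.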

The second is changing multiple elements simultaneously. If variant B changed the headline, the image, and the button all at once, you won't know which change drove the win, so the result doesn't translate into transferable learning. Either test one element at a time or set up a properly designed multivariate test.

The third is missing segment-level heterogeneity. Even when a variant wins overall, it can lose with new users but win with returning users, behave differently on mobile versus desktop, or show impact only on specific traffic sources. Make it a habit to break down results by key segments, not just at the overall level.
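A segment breakdown is simple bookkeeping: aggregate users and conversions per segment and variant, then compute the lift within each segment alongside the overall figure. The numbers below are hypothetical, constructed so that B wins overall (about +15%) and on desktop (+25%) while losing on mobile (-7.5%). Each segment's difference should still be tested for significance, and when you slice many segments, some will differ by chance alone.

```python
from collections import defaultdict


def lift_by_segment(rows):
    """Relative CVR lift of B over A per segment, plus an "ALL" total.

    rows: iterable of (segment, variant, users, conversions), variant in {"A", "B"}.
    """
    # totals[segment][variant] = [users, conversions]
    totals = defaultdict(lambda: {"A": [0, 0], "B": [0, 0]})
    for segment, variant, users, conversions in rows:
        for key in (segment, "ALL"):
            totals[key][variant][0] += users
            totals[key][variant][1] += conversions
    lifts = {}
    for segment, arms in totals.items():
        cvr_a = arms["A"][1] / arms["A"][0]
        cvr_b = arms["B"][1] / arms["B"][0]
        lifts[segment] = (cvr_b - cvr_a) / cvr_a
    return lifts


# Hypothetical results: B wins on desktop but loses on mobile.
rows = [
    ("desktop", "A", 6_000, 360),
    ("desktop", "B", 6_000, 450),
    ("mobile", "A", 4_000, 160),
    ("mobile", "B", 4_000, 148),
]

if __name__ == "__main__":
    for segment, lift in lift_by_segment(rows).items():
        print(f"{segment:>8}: {lift:+.1%}")
```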

The fourth is judging on short-term metrics alone. Aggressive CTA copy can lift short-term click-through or conversion, while silently hurting user satisfaction and long-term LTV. Where possible, monitor long-term guardrail metrics such as churn, LTV, and NPS alongside your primary metric.

The fifth is the novelty effect. Right after a UI change, metrics can rise simply because users are exploring something new, then revert to baseline a few weeks later. For UI changes aimed at existing, active users in particular, either extend the test duration or analyze new users, who never saw the old version, separately to avoid being fooled by novelty.

A/B testing is the practice of taking user touchpoints—web pages, ads, emails, product UI—and comparing the original against a modified version with random assignment, using statistical tests to determine which truly performs better. Through the three benefits of objective decision-making, causal clarity, and early failure detection, it forms the foundation of data-driven marketing and product improvement.

Success comes from disciplined execution of the five steps: clarifying the goal and hypothesis, calculating the sample size up front, changing one element at a time, running statistical and segment-level analysis, and turning every test into accumulated knowledge. Avoid the classic traps (insufficient sample size, multi-element changes, overlooked segment differences, short-term bias, and the novelty effect) and keep stacking small, validated wins. Done consistently, A/B testing becomes one of the most powerful levers for accelerating both organizational learning and business growth.

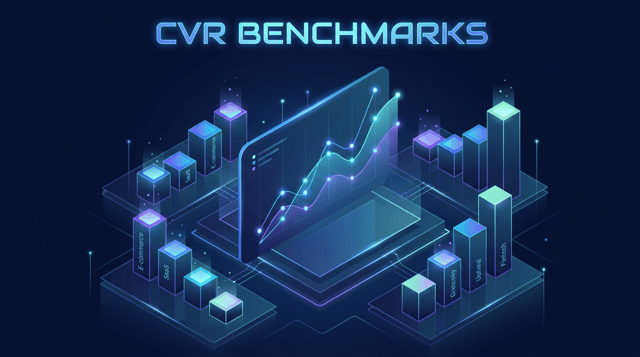
