Marketing KPI Design: KGI, KPI Trees, and SMART Indicator Selection

To turn marketing activity from a vague effort into an investment that delivers results, you need to define your goals numerically and design a coherent system of metrics to measure how well you're achieving them. At the heart of this work is KPI design. By working backward from the marketing organization's ultimate goal (the KGI) to structure KPIs at a granularity the front line can act on every day, you bring clarity to priorities, budget allocation, and how responsibilities are split across teams.

This article walks through the fundamentals — KGI, KPI, and KSF — then covers how to build a KPI tree, how to apply the SMART framework when selecting indicators, the metrics most commonly used across marketing, and the pitfalls that derail KPI programs in practice. Whether you work in BtoB or BtoC, the goal is to give you a starting point for building KPI design that fits your situation.

The starting point for understanding KPI design is the relationship between three concepts: KGI, KPI, and KSF. A KGI (Key Goal Indicator) quantifies the organization's ultimate goal — overall business outcomes such as revenue, operating profit, or market share. A KPI (Key Performance Indicator) is a process metric that needs to be tracked in service of reaching the KGI; examples include leads generated, opportunity-creation rate, and site traffic. A KSF (Key Success Factor) is the qualitative answer to the question "what do we need to achieve to reach the KGI?" — directional strategic bets like "grow branded search volume" or "improve lead-to-opportunity conversion."

In practice the order is: define the KGI, articulate the KSFs in plain language, then translate those KSFs into measurable KPIs. Skip the KSF step and jump straight to listing KPIs, and the metrics on the floor lose their connection to the KGI — which is exactly how you end up in the classic failure mode of "every KPI is green but the KGI misses." KPI design looks like a numbers exercise, but the real work is almost entirely about cleaning up the strategic hypothesis that sits behind those numbers.

For a metric to function as a marketing KPI, it has to clear a few basic conditions. First, it must have a causal relationship with the KGI — you should be able to articulate why moving the KPI moves the KGI. Second, the front line has to be able to influence it; metrics that don't respond to anything the team does, no matter how diligently you track them, won't drive improvement. Third, you need a repeatable way to measure it — if the number changes depending on who's compiling it, the organization can't share it as a common reference.

KPIs that fail those three conditions can sit on a dashboard but won't help anyone make decisions. The mindset to adopt is "include it because it's directly tied to a result we want to move," not "include it because it's measurable." The more aggressively you curate the list, the sharper the front line's focus becomes.

Digital tooling has caused an explosion in measurable indicators — GA4, ad platforms, MA/SFA systems, BI tools — and data is being generated everywhere, every day. More information is a tailwind in principle, but staring at a forest of unorganized metrics actually slows decision-making and pushes teams into a "data-rich, insight-poor" state. KPI design is the way out of that trap: the process of deciding, as an organization, which signals you'll watch and which you'll deliberately ignore.

On top of that, the ongoing phase-out of third-party cookies and tightening privacy regulation is making it harder to track individual ad effectiveness with precision. That makes the ability to design from the upstream KGI down to the downstream KPIs in a structured way — and concentrate effort on the highest-leverage indicators — more valuable than ever. What's being tested today isn't the precision of any single number, but the discipline to choose the right indicators, aligned with the strategic hypothesis.

A KPI tree starts by decomposing the KGI as a formula tied to your business model. For an e-commerce business, that might be "revenue = visits x conversion rate x average order value." For a BtoB SaaS business, "ARR = new ARR + expansion ARR - churned ARR." For a subscription business, "LTV = average monthly revenue per customer / monthly churn rate." You translate the revenue mechanics specific to your business model into an equation. If this decomposition is sloppy, every downstream KPI will end up floating without a real connection to the KGI.

When decomposing, the rule is that the equation must actually balance under ordinary arithmetic, with every term measurable in compatible units. A loose sum of unlike things such as "revenue = traffic + service + product strength" is not a formula; "revenue = sessions x CVR x AOV" is. Once you can express the KGI as an exact product (or, as with ARR, an exact sum) of measurable variables, you can quantitatively simulate how much the KGI moves when you move any one of them.
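As a rough illustration of that kind of simulation, the Python sketch below encodes the e-commerce formula and lifts each variable by 10% in turn. The baseline figures and the `revenue` helper are assumptions invented for the example, not benchmarks.

```python
def revenue(sessions: float, cvr: float, aov: float) -> float:
    """Revenue as the product of sessions, conversion rate, and average order value."""
    return sessions * cvr * aov


# Illustrative baseline (assumed figures, not benchmarks).
baseline = {"sessions": 500_000, "cvr": 0.02, "aov": 100.0}
base_revenue = revenue(**baseline)

# Lift each variable by 10% in turn and record the change in revenue.
uplift = 0.10
impact = {}
for name in baseline:
    scenario = dict(baseline)
    scenario[name] = baseline[name] * (1 + uplift)
    impact[name] = revenue(**scenario) - base_revenue

for name, delta in impact.items():
    print(f"+10% {name}: revenue changes by {delta:,.0f}")
```

Because this formula is a pure product, a 10% lift in any one variable yields the same revenue gain. The practical question then becomes which variable is cheapest for the team to move.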

Once you have the variables from Step 1, expand each one horizontally along the marketing funnel stages. "Sessions" might break down into "organic search + paid + social + direct + email," and "CVR" into "product page view rate x add-to-cart rate x checkout completion rate." By subdividing along the customer journey from upstream to downstream, you build a tree where any funnel-stage problem has a clear location on the map.
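A minimal sketch of that horizontal expansion follows, assuming made-up channel volumes and stage rates. Sessions roll up as a sum across channels, while CVR rolls up as a product of stage-to-stage rates.

```python
import math

# Hypothetical monthly sessions by channel (illustrative values only).
sessions_by_channel = {
    "organic_search": 200_000,
    "paid": 150_000,
    "social": 50_000,
    "direct": 70_000,
    "email": 30_000,
}

# Hypothetical stage-to-stage rates along the purchase path.
stage_rates = {
    "product_page_view_rate": 0.50,
    "add_to_cart_rate": 0.10,
    "checkout_completion_rate": 0.40,
}

total_sessions = sum(sessions_by_channel.values())  # additive node of the tree
overall_cvr = math.prod(stage_rates.values())       # multiplicative node of the tree

print(f"sessions: {total_sessions:,}")
print(f"overall CVR: {overall_cvr:.2%}")
```

Keeping each node as its own named entry is what lets a drop in the parent metric be traced to a specific channel or stage.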

When expanding horizontally, push it down to the unit your team can actually act on. Don't lump everything into "paid traffic" — split it into "Google Search Ads," "Meta Ads," "Yahoo! Ads," and so on, and go further down to campaign tiers like "branded keywords," "non-branded keywords," and "retargeting." That's what turns a tree into a set of KPIs that map directly to budget allocation and operational decisions.

Once the shape of the tree is in place, fill in current and target values at each node. Targets are derived backward from the KGI, working top-down through the tree. For example, if the KGI is $12M in annual revenue and the average order value is $100, that's 120,000 orders for the year; at a 2% CVR you'd need 6 million sessions. Walking the math top-to-bottom this way tells you whether your current channel mix can realistically supply the sessions you need.
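The back-calculation in that example can be sketched in a few lines of Python. The revenue target, AOV, and CVR come from the example above; the `required_volume` helper and the channel forecast are hypothetical, added only to show how a gap would surface.

```python
def required_volume(revenue_target: float, aov: float, cvr: float) -> tuple[float, float]:
    """Back out the orders and sessions needed to hit a revenue target."""
    orders = revenue_target / aov
    sessions = orders / cvr
    return orders, sessions


orders_needed, sessions_needed = required_volume(12_000_000, aov=100, cvr=0.02)

# Hypothetical forecast of the annual sessions the current channel mix can supply.
forecast_sessions = {"organic_search": 2_400_000, "paid": 1_800_000, "other": 800_000}
gap = sessions_needed - sum(forecast_sessions.values())

print(f"orders needed:   {orders_needed:,.0f}")
print(f"sessions needed: {sessions_needed:,.0f}")
print(f"session gap:     {gap:,.0f}")
```

A positive gap means the current mix cannot supply the target on its own, so either a variable in the formula has to improve or the target has to change.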

When the gap between current and target is extreme, that's a signal that either the target is unrealistic, or that the strategic premise (channel mix, AOV, CVR levels) needs a major rework. Instead of "we'll just double through sheer effort," you can have a concrete conversation about which variables to move and by how much. That shift — from willpower to specifics — is the single biggest payoff of putting numbers into the tree.

To make a numbered KPI tree actually move, assign each KPI a responsible owner and a monitoring cadence. The company-wide KGI is reviewed by leadership quarterly; upper-funnel KPIs (reach, sessions) sit with the marketing director on a monthly basis; channel-level details (CTR, CPA) are owned by operators and watched weekly or daily. Making this division of labor explicit prevents KPIs from becoming "someone else's problem."

It also helps to set a rule that reviews discuss not just "is this number good or bad?" but "so what do we do next?" — paired together. That alone shifts KPIs from numbers used for reporting to numbers used for improvement. Limiting the weekly review to three to five focal indicators while doing the wider sweep monthly or quarterly is also an effective way to vary granularity by cadence.

The S in SMART is Specific. KPI definitions need to be unambiguous enough that any reader interprets them identically. "Increase site visits" is too vague; "monthly unique users on our owned e-commerce site (as measured in GA4, bots excluded)," which specifies scope, measurement tool, and exclusion rules, is the level of definition you can actually operate against. Vague definitions surface as recurring debates in review meetings ("how did you pull this number?" "the definition was different last month") and steal time away from the improvement conversation that should be happening instead.

A practical way to lock in specificity is to maintain a one-page definition sheet per KPI: name, formula, data source, period, exclusions, owner. A single spreadsheet is enough, and just having it around dramatically reduces alignment drift. It also doubles as onboarding material for new team members and helps prevent KPI ownership from becoming personality-dependent.
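If you prefer to keep the definition sheet in code rather than a spreadsheet, one possible shape is sketched below. The `KpiDefinition` class, the `missing_fields` check, and the sample values are all hypothetical; only the six fields come from the list above.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class KpiDefinition:
    """One row of a KPI definition sheet (field names follow the list above)."""
    name: str
    formula: str
    data_source: str
    period: str
    exclusions: tuple[str, ...]
    owner: str


def missing_fields(kpi: KpiDefinition) -> list[str]:
    """Return the fields left empty, so incomplete definitions are easy to spot.

    Note: an empty exclusions tuple is also flagged, on the assumption that
    "no exclusions" should be an explicit decision rather than an omission.
    """
    return [field for field, value in asdict(kpi).items() if not value]


monthly_uu = KpiDefinition(
    name="Monthly unique users (owned e-commerce site)",
    formula="distinct users with at least one session in the month",
    data_source="GA4",
    period="calendar month",
    exclusions=("bot traffic",),
    owner="marketing director",  # hypothetical owner
)

draft = KpiDefinition(
    name="Leads generated",
    formula="",
    data_source="SFA/CRM",
    period="calendar month",
    exclusions=("test submissions",),
    owner="",
)

print(missing_fields(monthly_uu))
print(missing_fields(draft))
```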

The M in SMART is Measurable. A KPI only carries meaning if there's a system that captures it continuously and automatically. Indicators that require manual aggregation every month stop getting updated when things get busy and quietly become hollow. Before adopting a KPI candidate, verify whether it can be produced "with one click" from your existing measurement stack — GA4, ad platforms, SFA/CRM, BI tools — and only then proceed.

When considering a new KPI, weigh the cost of measurement against the insight it returns. If a metric takes dozens of hours to implement and ongoing effort to maintain, ask whether you really need that level of precision. If a substitute indicator can support 70-80% of the same decisions, simplifying measurement and redirecting that effort into actual execution usually produces more output for the organization overall.

The A in SMART is Achievable. The ideal target is "a stretch, but reachable through a realistic stack of levers." Targets you can hit easily breed slack; targets that are obviously out of reach lead the team to give up early. Calibrating to "reachable if we push hard," informed by past growth, market growth, competitor moves, and your resources (budget, headcount, channels), is the practical setting that keeps KPI operations sustainable.

A useful way to test achievability is to enumerate the levers required to deliver the target. "To add 100 leads per month, we'd need two new whitepapers, a 30% ad budget bump, and one trade show." Once you can decompose the target into a sum of concrete moves, you can judge whether the resources actually support it. If the list runs out before the target does, either revise the target or change the strategy.

The R in SMART is Relevant. A KPI has to be a real contributor to KGI achievement. The classic failure is treating vanity metrics — social followers, raw PVs, like counts — as priority KPIs because they're easy to measure, even though their causal link to the KGI is weak. The number trends up while revenue doesn't, and you discover after the fact that you've spent valuable resources on the wrong things.

To enforce relevance, articulate a hypothesis for each KPI in the form "if this metric moves by X, the KGI moves by Y." Even something rough lets you debate KPI priority quantitatively: "if branded search grows by 1,000 per month, site traffic gains roughly 800, and at a 2% CVR and a $100 average order value that's 16 orders, or about $1,600 in additional revenue." The hypothesis doesn't have to be perfect; what matters is the willingness to refine it as you operate.
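The arithmetic behind that hypothesis can be written down as a small function so it is easy to rerun as the assumptions change. This is only a sketch: the `kgi_impact` name and its parameters are invented here, and the $100 average order value is an assumption implied by the $1,600 figure rather than stated outright.

```python
def kgi_impact(metric_delta: float, visit_rate: float, cvr: float, aov: float) -> float:
    """Estimate the revenue change from a change in an upstream metric."""
    added_sessions = metric_delta * visit_rate  # searches that turn into site visits
    added_orders = added_sessions * cvr         # visits that convert
    return added_orders * aov                   # orders valued at average order value


# 1,000 more branded searches per month, about 800 extra visits, 2% CVR,
# and an assumed $100 average order value.
estimate = kgi_impact(metric_delta=1_000, visit_rate=0.8, cvr=0.02, aov=100)
print(f"estimated monthly revenue impact: ${estimate:,.0f}")
```

Running the same function for each candidate KPI gives a common unit (revenue) in which to compare their priority.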

The T in SMART is Time-bound. A KPI without a "by when" can't establish priority. Layering annual, quarterly, monthly, and weekly targets is what allows day-to-day decisions about "what to do this week" to ladder up to KGI achievement at year end. KPIs without deadlines fall into a permanent loop of "we'll get serious starting next month."

In practice, anchoring deadlines to your existing review cycles — quarterly or half-yearly — works best. Set a quarter-end target for each KPI, check progress monthly, and confirm that supporting initiatives are tracking weekly. Build that three-tier rhythm and long-term goals connect naturally to short-term action, and you structurally prevent the "oh no, we're going to miss" panic at quarter end.

At the top of the funnel, you measure how broadly your brand or product is reaching people. Typical indicators include ad reach, impressions, and frequency; social impressions and accounts reached; and signals like Google Trends or branded search volume that show how many people are actively looking up your brand. Awareness-stage KPIs are far from a purchase, so the right lens isn't immediate conversions — it's how much demand you're creating.

Branded search volume in particular has gained attention as one of the few quantitative ways to measure brand-building and content marketing impact. It's increasingly used to evaluate efforts like TV commercials and digital video that don't tie cleanly to direct conversions, and many organizations now track it as a number to grow steadily over the medium-to-long term.

After awareness comes a measurement of how many interested people actually engaged with your site or content. Typical examples include site sessions and unique users, ad click-through rate (CTR), and content metrics like time on page, scroll depth, and article completion rate. Here you evaluate both "how many showed up" and "how deeply they read once they did."

Interest-stage KPIs reflect both content quality and the targeting accuracy of your traffic sources. If sessions are growing but bounce rate is worsening, there's likely a mismatch between traffic source and value proposition, and redesigning the creative or landing-page messaging becomes a strong candidate lever.

At the consideration stage, you measure whether prospects are progressing into a state of "seriously considering purchase." In BtoB that means leads generated, MQLs (Marketing Qualified Leads), SQLs (Sales Qualified Leads), and opportunity-creation rate. In BtoC it includes add-to-cart rate, wishlist saves, free trial starts, and resource downloads. What matters here is watching both volume and quality — chasing volume alone tends to flood sales with low-quality leads and burn them out.

Representative quality indicators include lead-to-opportunity conversion and opportunity-to-deal conversion. Tracking how many leads progressed to opportunities and ultimately closed, broken down by channel and campaign, lets you distinguish "high-volume, low-quality channels" from "low-volume, high-quality channels." The principle is to allocate budget based on contribution to bookings, not raw lead counts.
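To make the volume-versus-quality distinction concrete, the sketch below computes both conversion rates per channel and ranks channels by closed deals per lead. The channel names and counts are fabricated for illustration, and `funnel_rates` is a hypothetical helper.

```python
# Hypothetical counts per channel (illustrative only).
channels = {
    "whitepaper": {"leads": 1000, "opportunities": 50, "deals": 5},
    "webinar": {"leads": 400, "opportunities": 40, "deals": 4},
    "referral": {"leads": 50, "opportunities": 20, "deals": 8},
}


def funnel_rates(counts: dict[str, int]) -> dict[str, float]:
    """Lead-to-opportunity, opportunity-to-deal, and lead-to-deal rates for one channel."""
    leads, opps, deals = counts["leads"], counts["opportunities"], counts["deals"]
    return {
        "lead_to_opportunity": opps / leads if leads else 0.0,
        "opportunity_to_deal": deals / opps if opps else 0.0,
        "lead_to_deal": deals / leads if leads else 0.0,
    }


rates = {name: funnel_rates(counts) for name, counts in channels.items()}

# Rank by closed deals per lead rather than by raw lead volume.
ranked = sorted(rates, key=lambda name: rates[name]["lead_to_deal"], reverse=True)

for name in ranked:
    r = rates[name]
    print(f"{name:<10} leads={channels[name]['leads']:>5} "
          f"lead->opp={r['lead_to_opportunity']:.1%} lead->deal={r['lead_to_deal']:.1%}")
```

In this made-up data the channel with the fewest leads closes the most deals, which is the pattern a raw lead count would hide.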

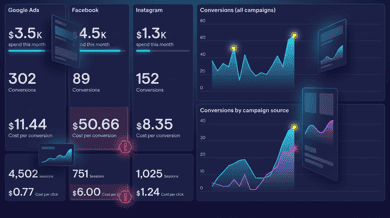

At the purchase stage, you measure conversion efficiency and revenue performance. Conversion rate (CVR), cost per acquisition (CPA), return on ad spend (ROAS), average order value (AOV), and per-order gross profit and gross margin are typical KPIs. ROAS and CPA can be tracked at the channel and campaign level and feed directly into daily ad operations decisions.
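For reference, the three efficiency metrics can be computed as below. The definitions are the commonly used ones (CVR per session here, although some teams compute it per click or per user), and the campaign figures are hypothetical.

```python
def cvr(conversions: int, sessions: int) -> float:
    """Conversion rate: conversions divided by sessions."""
    return conversions / sessions


def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition: ad spend divided by conversions."""
    return spend / conversions


def roas(revenue: float, spend: float) -> float:
    """Return on ad spend: attributed revenue divided by ad spend."""
    return revenue / spend


# Hypothetical campaign totals for one period.
campaign = {"spend": 5_000.0, "sessions": 10_000, "conversions": 200, "revenue": 20_000.0}

campaign_cvr = cvr(campaign["conversions"], campaign["sessions"])
campaign_cpa = cpa(campaign["spend"], campaign["conversions"])
campaign_roas = roas(campaign["revenue"], campaign["spend"])

print(f"CVR {campaign_cvr:.1%}, CPA ${campaign_cpa:,.2f}, ROAS {campaign_roas:.0%}")
```

Whatever definitions you settle on, writing them down once and reusing them across channels is what keeps channel-level comparisons meaningful.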

That said, evaluating ROAS or CPA on a last-click basis tends to underweight the contribution of upstream awareness efforts. Pairing in attribution analysis or marketing mix modeling (MMM) lets you assess channel contribution more fairly and invest in the channels that work over the long term. The modern standard is to view purchase-stage KPIs through both the "short-term efficiency" and "long-term contribution" lenses simultaneously.

After purchase comes loyalty: measuring whether customers keep transacting and recommend you to others. Typical indicators are customer lifetime value (LTV), repeat purchase rate, churn rate, and NPS (Net Promoter Score) and CSAT (customer satisfaction). As marketing strategies shift away from a pure new-acquisition focus toward LTV maximization, the importance of these indicators has risen accordingly.

In subscription and SaaS businesses, monthly churn rate and net revenue retention (NRR) are arguably the sharpest indicators of business health. An NRR above 100% means revenue can grow without acquiring any new customers — one of the metrics investors and executives weigh most heavily. Loyalty-stage KPIs sit closest to the executive KGI, and the levers that move them deserve the highest priority by design.
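A minimal sketch of an NRR calculation follows, using one widely used definition: recurring revenue from the starting customer cohort, after expansion, contraction, and churn, divided by the starting recurring revenue. Exact definitions vary by company, and the figures are invented.

```python
def net_revenue_retention(
    starting_mrr: float,
    expansion: float,
    contraction: float,
    churned: float,
) -> float:
    """NRR for a cohort of existing customers; new-customer revenue is excluded."""
    if starting_mrr <= 0:
        raise ValueError("starting_mrr must be positive")
    return (starting_mrr + expansion - contraction - churned) / starting_mrr


# Hypothetical month: upsells outweigh downgrades and cancellations.
nrr = net_revenue_retention(
    starting_mrr=100_000, expansion=15_000, contraction=2_000, churned=8_000
)
print(f"NRR: {nrr:.0%}")
```

With these inputs the existing customer base alone grows revenue by 5%, which is what an NRR above 100% means in practice.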

The most common failure is loading a dashboard with dozens of KPIs and declaring "all of them are important." The team can't tell where to focus, and the result is that everything gets done halfway. The rule of thumb: at most five KPIs in your weekly review, ideally three. Treat the rest as reference indicators that support, but don't drive, decisions.

When pruning, the criterion is the product of "impact on the KGI when moved" and "how much your team can actually move it." KPIs with both high impact and high actionability are where you concentrate scarce resources. Indicators with high impact but low controllability, such as overall market trends, should be downgraded to reference signals rather than managed as KPIs.
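One way to apply that criterion is to score each candidate on both axes and sort by the product. The 1-to-5 scales, the scores, and the cutoff of three focal KPIs below are assumptions chosen for illustration.

```python
# Hypothetical candidates scored 1 (low) to 5 (high) on each axis.
candidates = {
    "CVR": {"impact": 5, "actionability": 4},
    "paid search CPA": {"impact": 3, "actionability": 5},
    "branded search volume": {"impact": 4, "actionability": 3},
    "overall market demand": {"impact": 5, "actionability": 1},
    "social followers": {"impact": 1, "actionability": 4},
}

FOCUS_LIMIT = 3  # assumed cap for the weekly review


def priority(scores: dict[str, int]) -> int:
    """Priority is the product of impact and actionability."""
    return scores["impact"] * scores["actionability"]


ranked = sorted(candidates, key=lambda name: priority(candidates[name]), reverse=True)
focus_kpis = ranked[:FOCUS_LIMIT]
reference_indicators = ranked[FOCUS_LIMIT:]

print("focus:", focus_kpis)
print("reference:", reference_indicators)
```

Note how the high-impact but low-controllability item lands in the reference list: multiplying the two scores penalizes indicators the team cannot move.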

A pitfall that surfaces constantly in digital advertising is over-reliance on last-click attribution. Customers typically touch multiple channels before converting, and assigning the entire conversion to the last click systematically underweights the channels that contributed at the awareness and consideration stages. Budget drifts toward retargeting and branded keywords, demand-creation investment thins out, and a downward spiral sets in.

The remedy is to view the data through multiple attribution models — first-click, data-driven, linear — side by side, or to use marketing mix modeling to estimate channel contribution statistically. There's no perfect answer, but evaluating from multiple angles structurally prevents the failure mode of blindly trusting last-click and starving the upper funnel.
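The side-by-side comparison can be sketched for the rule-based models as follows. The conversion paths are made up, `attribute` is a hypothetical helper, and data-driven attribution and MMM are not covered because they require statistical modeling beyond a short example.

```python
from collections import defaultdict

# Hypothetical conversion paths: ordered channel touches before one conversion each.
paths = [
    ["display", "organic", "branded_search"],
    ["social", "retargeting"],
    ["organic", "branded_search"],
    ["display", "retargeting"],
]


def attribute(paths: list[list[str]], model: str) -> dict[str, float]:
    """Distribute one conversion per path according to a rule-based model."""
    credit: dict[str, float] = defaultdict(float)
    for path in paths:
        if model == "first_click":
            credit[path[0]] += 1.0
        elif model == "last_click":
            credit[path[-1]] += 1.0
        elif model == "linear":
            share = 1.0 / len(path)  # equal credit to every touch
            for channel in path:
                credit[channel] += share
        else:
            raise ValueError(f"unknown model: {model}")
    return dict(credit)


results = {m: attribute(paths, m) for m in ("first_click", "last_click", "linear")}

for model, credit in results.items():
    summary = ", ".join(f"{ch}={value:.2f}" for ch, value in sorted(credit.items()))
    print(f"{model:<12} {summary}")
```

In this toy data, last-click gives display and social no credit at all, while first-click and linear both do, which is exactly the blind spot described above.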

When marketing, sales, and leadership work from different definitions and views of the same KPIs, discussions about the numbers don't connect, and decisions slow down. Take "lead": one team counts every form submission, another counts only those passing an attribute score filter, and the two are talking about completely different numbers. Establishing a shared KPI dictionary across the company — unified definitions and calculations — directly improves organizational productivity.

A practical way to drive shared language is to gather the relevant teams and spend a full day reviewing core KPI definitions in a "KPI workshop." Surface the existing inconsistencies, agree on unified definitions, and rebuild dashboards accordingly. Once done, the output lasts a long time, which makes it a high-value initial investment.

Over-prioritizing achievability and setting conservative targets pulls the organization into a comfort zone, and growth ends up trailing the broader market growth rate. "100% target attainment" looks reassuring, but in some cases it actually signals missed growth opportunity. Layering in stretch targets — ambitious goals where 70-80% attainment counts as success — and switching to a model where falling short still produces learning can fundamentally change the organization's growth curve.

When introducing stretch targets, be careful about how they connect to performance evaluation. If missing a target maps directly to a poor review, the team will only ever set safe targets. Separate "commit targets" (must-hit) from "stretch targets" (challenges), and let the stretch effort be evaluated even if the number isn't reached. That's how you preserve a healthy goal-setting culture.

The first step in operating KPIs as an organization is to build a one-page KPI sheet. A three-tier layout works well: KGI on top, primary KPIs (around five) in the middle, sub-KPIs (channel- and campaign-level details) at the bottom, with each KPI carrying definition, current value, target, attainment rate, owner, and a comment field. A Google Sheet is fine — design it for use in monthly reviews.

The advantage of a one-page sheet is that leadership, department heads, and the front line can all hold a conversation looking at the same screen. BI dashboards are useful but information-heavy, and discussions tend to scatter. Switching to a monthly-updated "financial statement"-style one-pager noticeably improves both the speed and the consistency of decision-making.

KPI operations don't drive results just by being watched. You need a review cycle that builds a rhythm of "see the number, decide the next action." Weekly reviews focus on operational metrics (daily ad CTR/CPA, lead generation); monthly reviews focus on the middle-tier primary KPIs and overall funnel progress; quarterly reviews examine the KGI and the validity of the strategic hypothesis itself. Granularity should vary with cadence.

An ideal time split for review meetings is roughly 1:3:6 across number reporting, interpretation, and next actions. Don't burn time reading numbers aloud; concentrate it on "why did the number land here?" and "so what do we do next?" That's how a culture grows in which KPIs lead to action.

KPIs aren't set-and-forget. They need regular review. Shifts in business phase (pre/post-PMF, growth, maturity), changes in the competitive landscape, the rise of new channels, and updated privacy regulations all justify recalibrating the structure and weights of your KPIs. A practical rhythm is a full KPI tree review once a year, or every six months at strategic inflection points.

When reviewing, apply the test "would removing this KPI hurt our decision-making?" to each indicator. If the answer is no, drop it without hesitation and slim down the dashboard. KPIs are harder to remove than to add, and a common observation among practitioners is that the organizations most willing to remove KPIs are the ones whose KPI operations are working best.

Marketing KPI design is the work of translating an abstract business goal (the KGI) into concrete indicators (KPIs) the front line can move every day. Clarifying the relationship between KGI, KPI, and KSF; decomposing the KGI along the business model; expanding along funnel stages; and refining individual indicators with the SMART framework — that sequence builds an organization where strategy and operations are connected by a single line.

The representative KPIs covered in this article — reach, branded search, sessions, CVR, CPA, ROAS, LTV, NPS, and so on — are an industry-common menu. What matters is choosing from that menu the ones that fit your business model, strategic hypothesis, and organizational structure, narrowing down to roughly five, and operating them faithfully over time. Avoid the pitfalls — too many KPIs, vanity-metric overweighting, last-click worship, definition drift across the organization — and put the operation on the rails of a one-page KPI sheet and a review cycle, and KPI design will reliably lift marketing results.

KPI design isn't about reaching perfection on day one; it's something whose precision improves while you run it. Start with a rough hypothesis, draft an initial KPI tree by decomposing the KGI, and put it into action. If this article serves as the companion guide for that first step, that's a successful outcome.
